Queuing Theory and the Production Pipeline

Project management is not a hard science and often deals with many aspects that are not well defined. However, applying a bit of science / math can often lead to new insights and more efficient processes. In this post, I will apply a touch of queuing theory to project management.

I do not consider myself an expert in this area. I am a strong agile proponent and a practicing certified scrum master. I am continually learning and improving my understanding. Please read this with a bit of skepticism and provide constructive feedback / debate in the comment section.

Queuing Theory

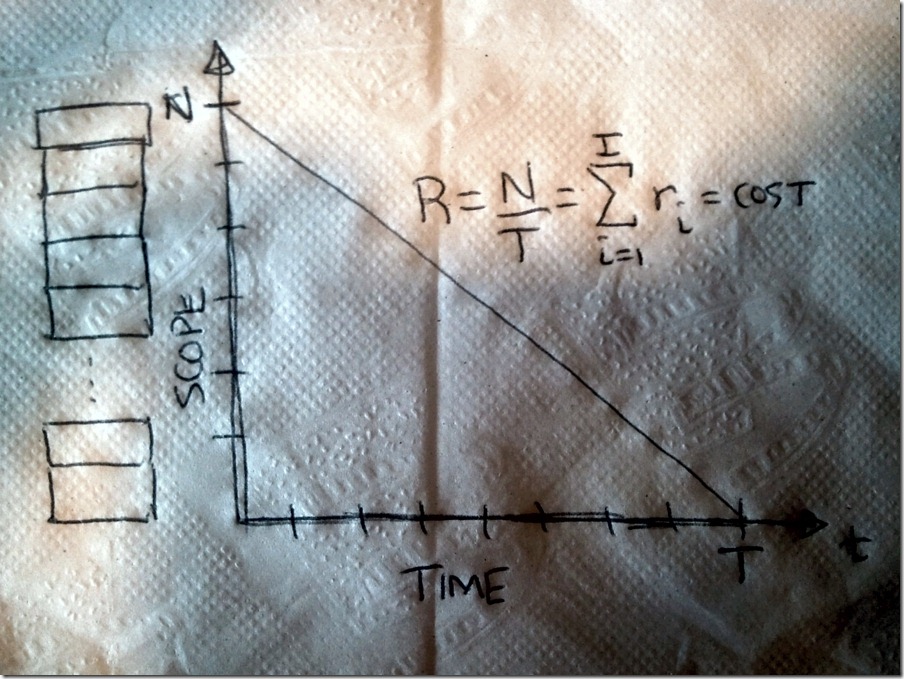

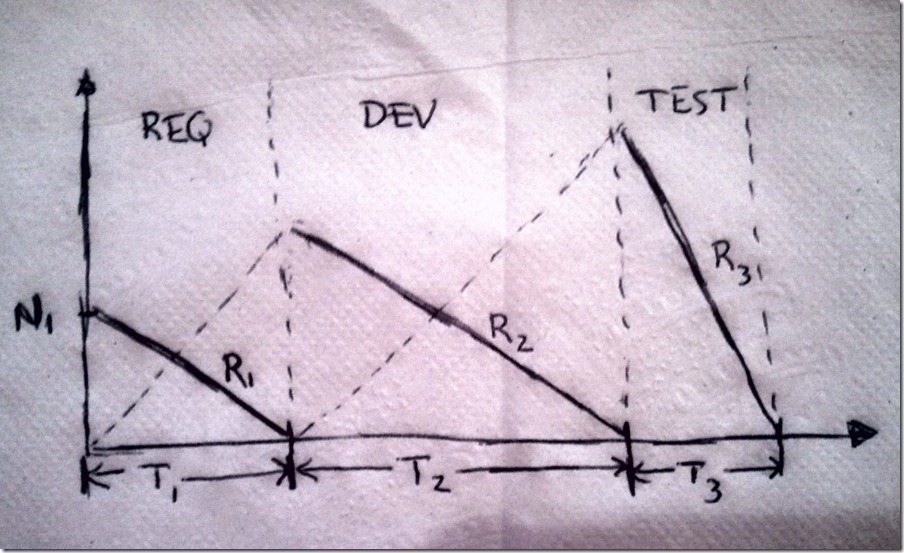

Many times in a project a team will have a list or a queue of tasks they need to complete. This team could be a group of engineers analyzing customer requirements, a few developers implementing the requirements, or a crew of testers. Here is a back of the envelope (or napkin) picture of a queue.

In this case the queue depicts ‘N’ items. These N items define the scope of the work or the ‘backlog’. The team will work on the N items and complete them all in ‘T’ time. Many times you will hear the term ‘burn down’ to refer to this activity. The team establishes a consistent rate ‘R’ of burning down queued items. The rate ‘R’ is the sum of all the individual contributor rates ‘r’. The rate ‘R’ is directly proportional to how well funded the project is.

The ‘scope-time-cost’ or ‘good-fast-cheap’ relationships hold. The business can only choose two. Therefore:

- If the scope ‘N’ and time ‘T’ are fixed, then the project needs the appropriate funding (resources) to create the desired burn down rate ‘R’.

- If the scope ‘N’ and the resources ‘R’ are fixed, then the queue will be emptied at the time ‘T’.

- If the resources ‘R’ and time ‘T’ are fixed, then the scope ‘N’ is what you can afford.
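The three cases above all follow from one relationship, N = R × T, assuming the burn down rate stays constant. Here is a minimal sketch of that arithmetic. The function names and the sample rates are my own illustrations, not part of any standard library.

```python
from typing import Sequence


def team_rate(individual_rates: Sequence[float]) -> float:
    """Team burn down rate R: the sum of the individual rates r."""
    return sum(individual_rates)


def required_rate(n_items: float, time: float) -> float:
    """Scope N and time T fixed: the rate R the project must fund."""
    return n_items / time


def completion_time(n_items: float, rate: float) -> float:
    """Scope N and rate R fixed: the time T at which the queue empties."""
    return n_items / rate


def affordable_scope(rate: float, time: float) -> float:
    """Rate R and time T fixed: the scope N you can afford."""
    return rate * time


if __name__ == "__main__":
    # Hypothetical team: three contributors, rates in items per week.
    R = team_rate([2.0, 1.5, 0.5])
    print("Weeks to burn down 40 items:", completion_time(40, R))
    print("Rate needed for 40 items in 8 weeks:", required_rate(40, 8))
    print("Scope affordable in 6 weeks:", affordable_scope(R, 6))
```

Real teams rarely hold a perfectly constant rate, so treat these numbers as back of the envelope estimates.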

Risk Management – Queues

Controlling risk is an important activity in project management. There are risks that can impact scope ‘N’, time ‘T’ and burn down rate ‘R’. One of the largest risks that a queue has is that queued items no longer represent customer needs. Items in the queue have a shelf life. Imagine the items represent items to be implemented by a development team. These items originated from customer requirements that have been analyzed and fleshed out by the requirements team. What is the shelf life for these items? Is it one year? Six months? Probably not. Many industries move much faster than this. For some industries even a one month shelf life is too long.

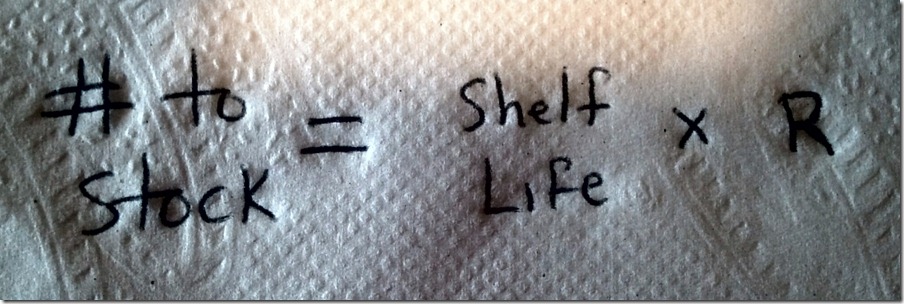

So what can be done to mitigate this shelf life risk? The answer is simple. Keep the queue prioritized and stock it with only as many items as your team has the capacity to complete.

This equation represents the maximum number of items that can be queued without exceeding the shelf life. The ideal mitigation of this risk is to push this towards a ‘just-in-time’ queuing system. In lean software engineering ‘waste’ is defined as ‘everything not adding value to the customer’. Queued items represent ‘waste’. Just-in-time queues minimize the waste in the system.
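One way to read this limit: if the team burns down items at a constant rate R, the last of N queued items is finished at time N / R, so keeping every item inside its shelf life means N ≤ R × shelf life. The sketch below assumes exactly that. The function name and the sample numbers are hypothetical.

```python
import math


def max_queue_size(rate: float, shelf_life: float) -> int:
    """Largest whole number of items that can be queued so the last
    one is still completed within its shelf life.

    Assumes a constant burn down rate and a single shelf life shared
    by every item; both use the same time unit.
    """
    if rate < 0 or shelf_life < 0:
        raise ValueError("rate and shelf_life must be non-negative")
    return math.floor(rate * shelf_life)


if __name__ == "__main__":
    # Hypothetical: 4 items per week, items go stale after 2 weeks.
    print(max_queue_size(rate=4.0, shelf_life=2.0))
```

Shrinking the shelf life (or the queue) pushes this toward the just-in-time case, where only the items about to be worked on are queued.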

Chained Queues

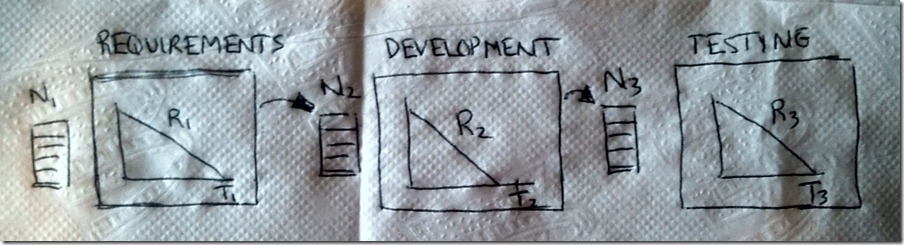

It is very common that the work of burning down items on one queue feeds another queue. This forms a production pipeline that items work their way down. For example here is a three stage queue forming a production pipeline:

Each queue will resource a group of individuals with a specialized skill set. Often the number of items in the downstream queues is larger than in the previous queues. For example, a single customer requirement may result in multiple system requirements. One system requirement may have multiple tests to ensure functionality under various conditions. Downstream queues don’t have to wait for upstream queues to complete their backlog before proceeding. In fact, there are benefits to not doing this as we will see.
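To get a feel for how quickly downstream queues can grow, here is a rough sketch that multiplies the first queue's size by an average fan-out factor at each hand off. The fan-out factors are made-up averages for illustration; real ratios vary from item to item.

```python
from typing import List, Sequence


def downstream_queue_sizes(n_first: float, fan_out: Sequence[float]) -> List[float]:
    """Return the size of each queue in the chain.

    n_first is the number of items in the first queue; fan_out[i] is
    the assumed average number of items that one item in queue i
    produces in queue i + 1.
    """
    sizes = [n_first]
    for factor in fan_out:
        sizes.append(sizes[-1] * factor)
    return sizes


if __name__ == "__main__":
    # Hypothetical: 10 customer requirements, each yielding about 3
    # system requirements, each of which needs about 4 tests.
    print(downstream_queue_sizes(10, [3, 4]))
```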

The number of queues may vary depending upon the specific software development process. For the sake of discussion, I will continue forward assuming the three queues above.

Risk Management – Chained Queues

The final customer does not need to know how many queues exist, what they are, or their order. They are concerned with what enters and exits the production pipeline. This customer provides an initial voice (voice of the customer) and expects a product out the other end on time and within budget. The probability of meeting this expectation is inversely proportional to how much time it takes to turn the ‘voice of the customer’ into product. To make things more difficult, customers often change their expectations after the initial requirements have been gathered.

To mitigate this risk, constant communication with the customer is necessary. Expecting change and providing opportunities to change is a common agile principle. This will be discussed further below.

Managed Flow – Waterfall

The flow of items down the chain of queues (or the production pipeline) can become a problem. An improperly managed pipeline can result in teams either being too far under or too far over capacity. Here is an example of a pipeline where each queue fully empties before the next queue begins.

This type of flow is commonly known as the ‘waterfall’ or ‘phased gate’ approach. The ‘requirements phase’ must be completed before the ‘development phase’ begins and so on. This can lead to downstream queues that rapidly exceed their shelf life and resource capacity. The items flow down the pipeline in a big lump. Resource consumption (capacity management) is also lumpy.

An area of ‘friction’ in the pipe often exists around the ‘hand off’ areas. The requirements engineers have been analyzing and producing system requirements for months. The sheer number of system requirements cannot easily be provided to the developers in a clear and concise manner.

The customer is provided an inspection opportunity when the final queue is empty (T1 + T2 + T3). That is, when the final product is completed. At this time, change is the most expensive.

Managed Flow – Agile

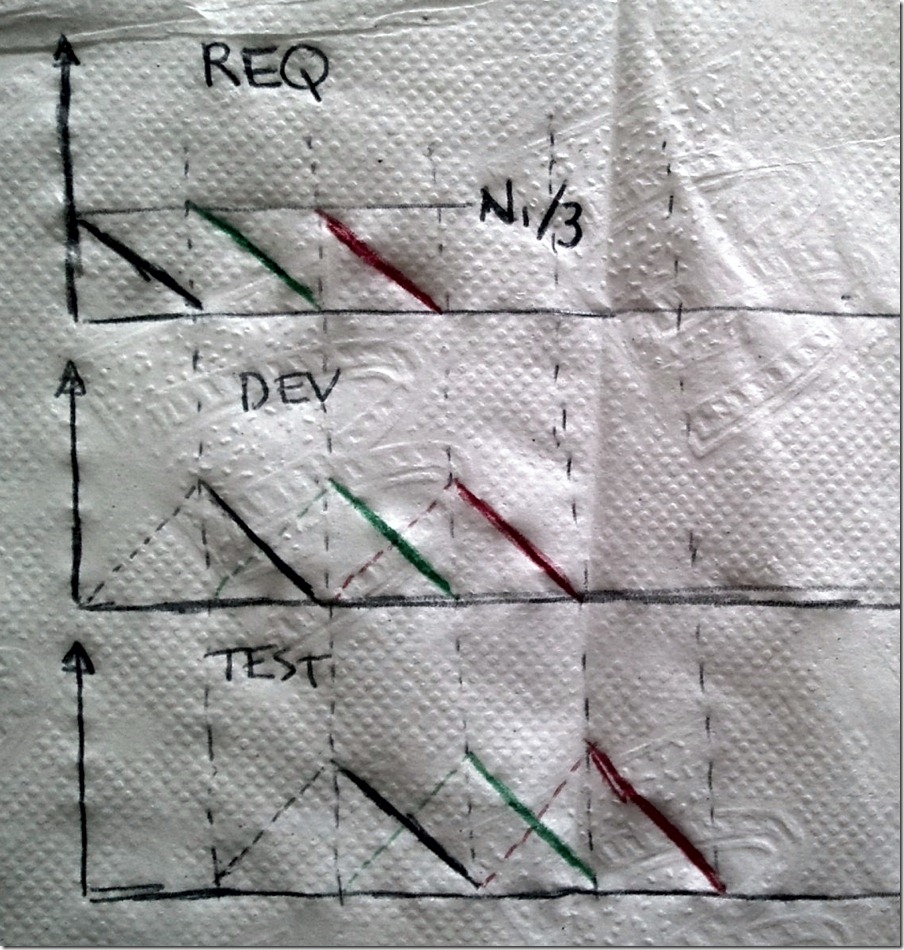

A different way to manage the pipeline flow is to develop the product iteratively using a regular cadence. During each interval a portion of the original requirements is developed as fully as possible. Here is a pictorial of this scenario where the original requirements are broken down into thirds:

In this scenario, the customer (or a customer representative) prioritizes the full backlog of N1 items. The requirements group then pulls the top one-third (N1/3) of the items, analyzes them, and creates system requirements. The difference is that the developers do not wait until all N1 requirements are analyzed. They only wait for the first one-third.

In this case, one-third was taken as an example. Most of the time the pipeline team (requirements + devs + testers in this case) determines a maximum queue capacity or a work in progress (WIP) limit. The team then pulls from the original requirements just enough to fill the queue. This allows the items in each queue to be processed before their shelf life expires and keeps the load on the individual teams within their maximum capacity.

In the above image, the flow along the pipeline can easily be visualized. Now instead of one large lump of items, there are many smaller lumps. Each lump still traverses the full pipeline. The pipeline flow and resource consumption have a very regular cadence. The cadence is typically set by establishing a time limit (time box) for each interval. That in turn determines the WIP limit. Having a regular cadence allows resources (capacity) to be managed more effectively.

The work exiting the pipeline is an incremental improvement to the product. These incremental improvements allow the product to evolve until a shippable version of the product exists. These incremental improvements also provide inspection points. The customer has the opportunity to provide feedback at a regular interval. This promotes constant communication and provides a mechanism for the customer to introduce change. This mitigates the cost of change by allowing the customer to ‘steer the boat’ early and often.
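A rough way to compare the two flows is to ask when the customer first gets to inspect something. The sketch below assumes each stage's time scales linearly with the size of the batch it processes, which is a simplification. The function names and stage times are hypothetical.

```python
from typing import Sequence


def first_inspection_waterfall(stage_times: Sequence[float]) -> float:
    """Waterfall: the first inspection comes only after every stage
    has emptied its full queue (T1 + T2 + T3)."""
    return sum(stage_times)


def first_inspection_iterative(stage_times: Sequence[float], batches: int) -> float:
    """Iterative: the first batch is 1/batches of the backlog, so
    (under the linear-scaling assumption) it exits the pipeline after
    1/batches of the total waterfall time."""
    if batches < 1:
        raise ValueError("batches must be at least 1")
    return sum(stage_times) / batches


if __name__ == "__main__":
    # Hypothetical stage times in months for the full backlog:
    # requirements, development, testing.
    stages = [3, 6, 3]
    print("Waterfall first inspection:", first_inspection_waterfall(stages))
    print("Thirds first inspection:", first_inspection_iterative(stages, 3))
```

With one batch the two functions agree, which is just another way of saying waterfall is the single-lump case of the same pipeline.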

Cooperative Flow

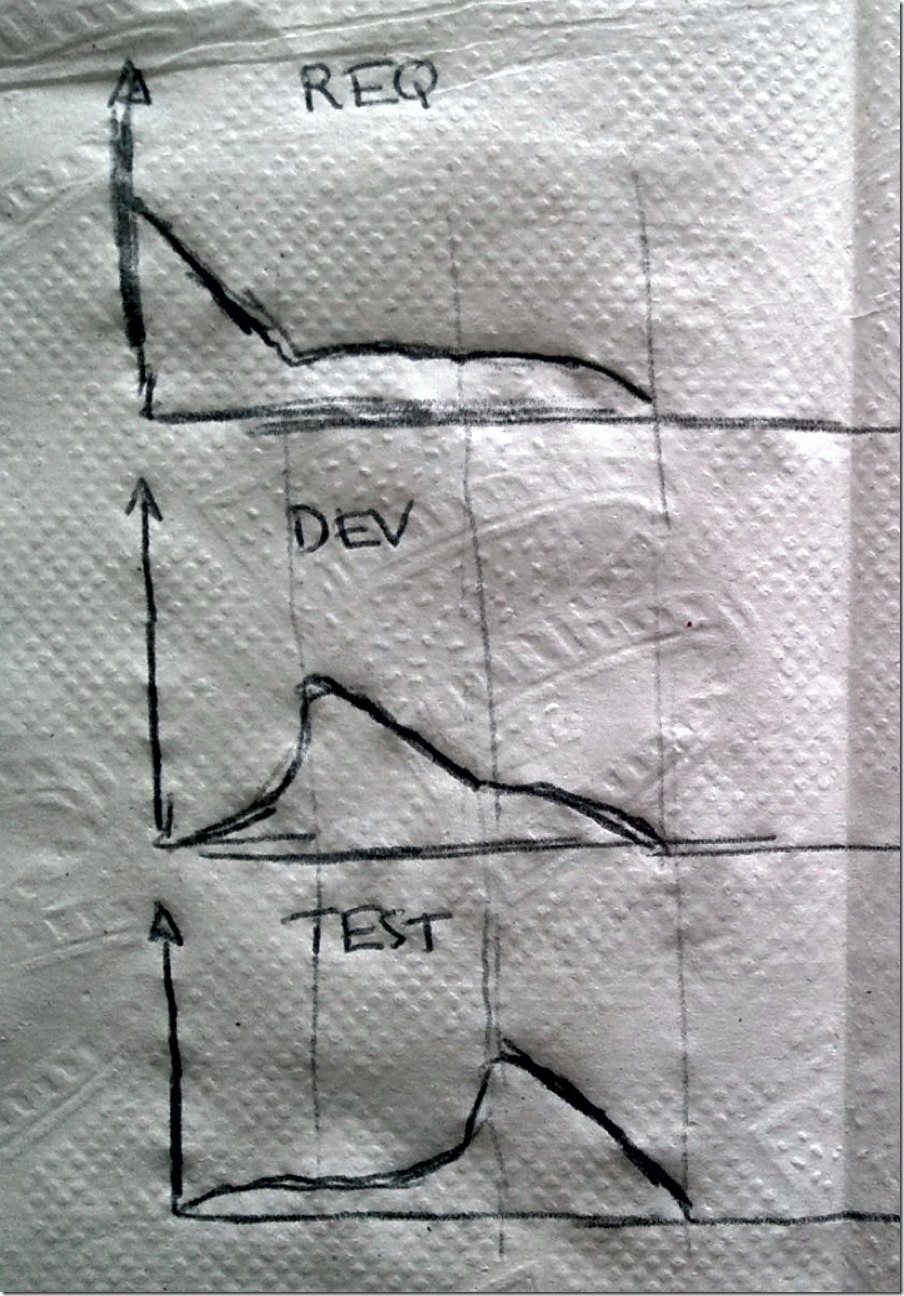

The above scenario depicts the development team accepting a queue from the requirements team without any overlap. In reality, there is generally some overlap as the development team ‘comes up to speed’ with the new items. Similarly, the testing group will likely need some development (and probably some requirements) time to create a comprehensive test plan. The ‘burn-down’ for any given iteration will likely be something as follows:

The requirements engineers are at full capacity during the period where heavy analysis is occurring. However, there is some additional draw on the requirements engineers during the development and testing phases. Likewise the other teams will likely have some draw upon their resources during all times with a spike during the period when the items are in their queue.

Having the full team (representatives from each queue) resourced for the full iteration is beneficial to the final quality of the product. For example, during what is normally considered ‘requirements engineering’ (or when most of the items are queued in the requirements backlog) developers can provide insight into an implementation detail that may affect the system requirement. Likewise, a test engineer can help ensure the system requirement is testable. During the primarily ‘development phase’ the requirements engineers can provide further clarity and the test engineers can help promote testable code.

This cooperative flow leads to a better product. Miscommunication that leads to rework is averted early. The final product better represents the original customer vision. The code base is easier to test (hopefully automatically).

Summary

Visualizing the software development process as a pipeline of queues offers a model that allows queuing theory to be applied. This provides many insights into how to manage capacity and mitigate risk. The model developed above is a bit idealized and will surely not fit every situation, but it provides a fundamental base. It can help explain the benefits / costs of the various project management methodologies (waterfall, scrum, lean). As always, I would love to hear what you think in the comments.
